RESEARCH

Marcello Ienca

A New Phase of BMI Research

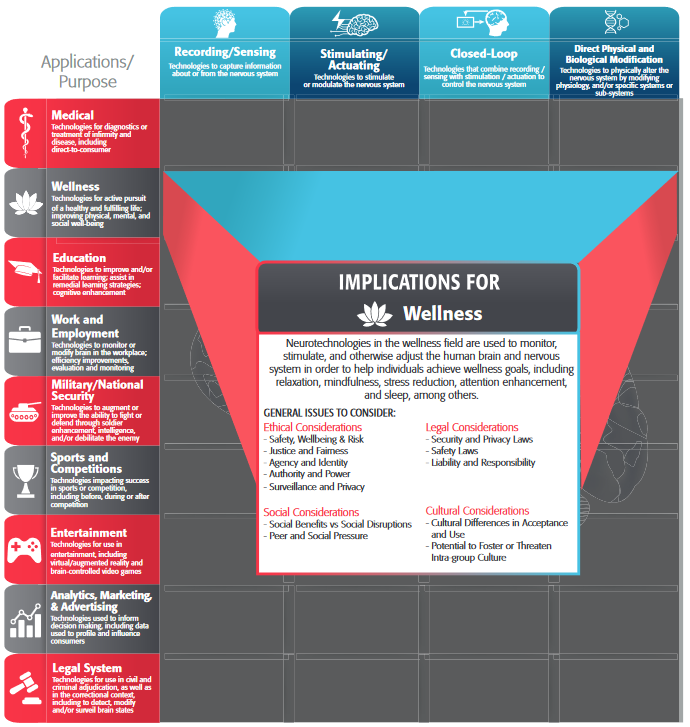

Progress in neurotechnology is critical to improving our understanding of the human brain and the delivery of neurorehabilitation and mental health services at the global level. We are now entering a new phase of neurotechnology development characterized by higher and more systematic public funding (e.g. through the US BRAIN Initiative, the EU-supported Human Brain Project or the government-initiated China Brain project), diversified private sector investment (among many others, through neuro-focused companies like Kernel and Neuralink), and increased availability of non-clinical neurodevices. Meanwhile, advances at the intersection of neuroscience and artificial intelligence (AI) are rapidly augmenting the computational resources of neurodevices. As neurotechnology becomes more socially pervasive and computationally powerful, several experts have called for preparing the ethical terrain and charting a route ahead for science and policy [1-3].

Ethical Challenges

Within the neurotechnology spectrum, brain-machine interfaces (BMIs) are of particular relevance from a social and ethical perspective: their capacity to establish a direct connection pathway between human neural processing and artificial computation has been described by experts as “qualitatively different” [1] and is hence believed to raise “unique concerns” [2].

Among these concerns, privacy is paramount. Brain recordings are informationally rich and can encode highly private and sensitive information about individuals, including predictive features of their health status and mental states. Decoding such private information is anticipated to become increasingly easy in the near future due to coordinated advances in sensor capability, spatial resolution of recordings and machine learning techniques for pattern recognition and feature extraction [2].

Three major types of privacy risk seem to be associated with BMIs: incidental disclosure of private information, unintended data leakage and malicious data theft [4]. Given the intimate link between neural recordings, on the one hand, and mental states and predictors of behavior, on the other, scholars have argued that the privacy challenges raised by BMIs are more complex and ethically sensitive than conventional privacy issues in digital technology, and have called for a domain-specific ethical and legal assessment. They have named this domain mental privacy [5].

The increasing use of machine learning and artificial intelligence in BMI also has implications for the notion of agency. For example, researchers have hypothesized that when BMI control is partly reliant on AI components, it might become hard to discern whether the resulting behavioral output is genuinely performed by the user [6], possibly affecting the user’s sense of agency and personal identity. This hypothesis has recently obtained preliminary empirical corroboration [7]. It should be noted, however, that while AI could obfuscate subjective aspects of personal agency, AI-enhanced BMI, considered as a whole, can massively augment the capability of the BMI user to act in a given environment, especially when used for device control by a patient with severe motor impairment.

With the increase in non-clinical uses of BMI, an additional ethical challenge will soon be neuroenhancement. While clinical BMI applications are aimed at restoring function in people with physical or cognitive impairments such as stroke survivors, neuroenhancement applications could, in the near future, produce higher-than-baseline performance among healthy individuals.

Do We Need Neurorights?

As I stated elsewhere [8], the ethical challenges posed by BMIs and other neurotechnologies urge us to address a fundamental societal question: whether, or under what conditions, it is legitimate to gain access to, or to interfere with, another person’s or one’s own neural activity.

This question needs to be asked at various levels of jurisdiction, including research ethics and technology governance. In addition, since neural activity is largely seen as the critical substrate of personhood and legal responsibility, ethicists and lawyers have recently proposed addressing this question at the level of fundamental human rights as well [5].

A recent comparative analysis of this topic concluded that existing safeguards and protections might be insufficient to adequately address the specific ethical and legal challenges raised by advances in brain-machine interfaces [5]. After reviewing international treaties and other human rights instruments, the authors called for the creation of new neuro-specific human rights. In particular, four basic neurorights have been proposed.

First, a right to mental privacy should protect individuals from the three types of privacy risk delineated previously. In its positive connotation, this right should allow individuals to seclude neural information from unconsented access and scrutiny, especially information processed below the threshold of conscious perception. Authors have argued that individuals might be more vulnerable to breaches of mental privacy than to breaches in other domains of information privacy because of their limited voluntary control over brain signals [5].

Second, a right to psychological continuity might guide the responsible integration of AI in BMI control and preserve people’s sense of agency and personal identity (often defined as the continuity of one’s mental life) from unconsented manipulation. Users of BMIs should retain the right to be in control of their behavior, without experiencing “feelings of loss of control” or even a “rupture” of personal identity [7]. At the same time, the right to psychological continuity is well suited to protecting against unconsented interventions by third parties, such as unauthorized neuromodulation. This principle might become particularly important in the context of national security and military research, where personality-altering neuroapplications are currently being tested for warfighter enhancement and other strategic purposes [9].

When the unconsented manipulation of neural activity results in physical or psychological harm to the user, a right to mental integrity might be enforced. This right is already recognized by international law (Article 3 of the EU’s Charter of Fundamental Rights) but is codified as a general right to access mental health services, with no specific provision about the use or misuse of neurotechnology. Therefore, a reconceptualization of this basic right should aim not only at protecting from mental illness but also at demarcating the domain of legitimate manipulation of neural processing.

Finally, a right to cognitive liberty should protect the fundamental freedom of individuals to make free and competent decisions regarding the use of BMIs and other neurotechnologies. Based on this principle, competent adults should be free to use BMIs for either clinical or neuroenhancement purposes as long as they do not infringe other people’s liberties. At the same time, they should have the right to refuse coercive applications, including implicitly coercive ones [10].

Uncertainty Ahead

This proposal for creating neuro-specific rights has recently been endorsed by impact analysis experts [11] and by leading neuroscientists and neurotechnology researchers [1]. Earlier this year, it was echoed by “A Proposal for a Universal Declaration on Neuroscience and Human Rights” to the UNESCO Chair of Bioethics [12].

However, many questions still need to be addressed. First, it remains an open question whether neurorights should be seen as brand new legal provisions or rather as evolutionary interpretations of existing human rights. Similarly, it is unclear to whom neurorights should be ascribed: the brain itself, as was recently proposed [11], or the whole individual person. Finally, grey zones of legal and ethical provision should be further explored. For example, while a right to cognitive liberty might protect the free choices of competent adults, it is questionable whether parents should have the right to neuroenhance their children or whether family representatives should have the right to refuse clinically beneficial neurointerventions on behalf of cognitively disabled patients. Addressing these kinds of moral dilemmas will require an open and public debate involving not only scientists and ethicists but also ordinary citizens.

In addition, responsible ethical and legal impact assessments should be based on scientific evidence and realistic time frames, avoiding fear-mongering narratives that might delay scientific innovation and obliterate the benefits of BMI for the population in need.

A Roadmap for Responsible Neuroengineering

When addressing these fundamental questions, ethical evaluations should not be reactive but proactive. Instead of simply reacting to ethical conflicts raised by new products, ethicists have a duty to work together with neuroscientists, neuroengineers, and clinicians to anticipate ethical challenges and promptly develop proactive solutions. A framework for proactive ethical design in neuroengineering has recently been proposed [13] and could be applied to various areas of neurotechnology research.

In addition, calibrated policy responses should take into account issues of fairness and equality. BMI should be fairly distributed and should not exacerbate pre-existing socioeconomic inequalities. While access to BMI-mediated health solutions should be as widespread as possible, open-development initiatives including hackathons, open-source platforms (e.g. Open BCI) and citizen-led data-sharing initiatives should be incentivized. In parallel, the growing involvement of for-profit corporations in BMI development urges us to assess the democratic accountability of company-driven technology development. In a not-too-distant future where BMI will likely be widespread, there will be an increasing need to maintain trust in data donation among individual citizens. This could be obtained through clear rules for data collection and secondary use, enhanced data protection infrastructures, public engagement and neurorights enforcement.

References

1. Yuste, R., Goering, S., Bi, G., Carmena, J.M., Carter, A., Fins, J.J., Friesen, P., Gallant, J., Huggins, J.E., and Illes, J.: ‘Four ethical priorities for neurotechnologies and AI’, Nature News, 2017, 551, (7679), pp. 159

2. Clausen, J., Fetz, E., Donoghue, J., Ushiba, J., Spörhase, U., Chandler, J., Birbaumer, N., and Soekadar, S.R.: ‘Help, hope, and hype: Ethical dimensions of neuroprosthetics’, Science, 2017, 356, (6345), pp. 1338-1339

3. Ienca, M.: ‘Neuroprivacy, neurosecurity and brain-hacking: Emerging issues in neural engineering’ (Schwabe, 2015), pp. 51-53

4. Ienca, M., and Haselager, P.: ‘Hacking the brain: brain–computer interfacing technology and the ethics of neurosecurity’, Ethics and Information Technology, 2016, 18, (2), pp. 117-129

5. Ienca, M., and Andorno, R.: ‘Towards new human rights in the age of neuroscience and neurotechnology’, Life Sciences, Society and Policy, 2017, 13, (1), pp. 5

6. Haselager, P.: ‘Did I Do That? Brain–Computer Interfacing and the Sense of Agency’, Minds and Machines, 2013, 23, (3), pp. 405-418

7. Gilbert, F., Cook, M., O’Brien, T., and Illes, J.: ‘Embodiment and Estrangement: Results from a First-in-Human “Intelligent BCI” Trial’, Science and Engineering Ethics, 2017

8. Ienca, M.: ‘The Right to Cognitive Liberty’, Sci. Am., 2017, 317, (2), pp. 10-10

9. Tennison, M.N., and Moreno, J.D.: ‘Neuroscience, Ethics, and National Security: The State of the Art’, PLoS Biol., 2012, 10, (3), pp. e1001289

10. Hyman, S.E.: ‘Cognitive enhancement: promises and perils’, Neuron, 2011, 69, (4), pp. 595-598

11. Cascio, J.: ‘Do brains need rights?’, New Scientist, 2017, 234, (3130), pp. 24-25

12. Pizzetti, F.: ‘A Proposal for a: “Universal Declaration on Neuroscience and Human Rights”’, Bioethical Voices (Newsletter of the UNESCO Chair of Bioethics), 2017, 6, (10), pp. 3-6

13. Ienca, M., Kressig, R.W., Jotterand, F., and Elger, B.: ‘Proactive Ethical Design for Neuroengineering, Assistive and Rehabilitation Technologies: the Cybathlon Lesson’, J. Neuroeng. Rehabil., 2017, 14, pp. 115

About the Author

Marcello Ienca is a postdoctoral researcher at the Health Ethics & Policy Lab (Dept. of Health Sciences & Technology), ETH Zurich. His research focuses on the ethics of human-machine interaction as well as on responsible innovation in neurotechnology, big-data-driven research and artificial intelligence. He was awarded the Pato de Carvalho Prize for Social Responsibility in Neuroscience and the Schotsmans Prize of the European Association of Centres of Medical Ethics (EACME). He is a Board Member of the International Neuroethics Society (INS).
